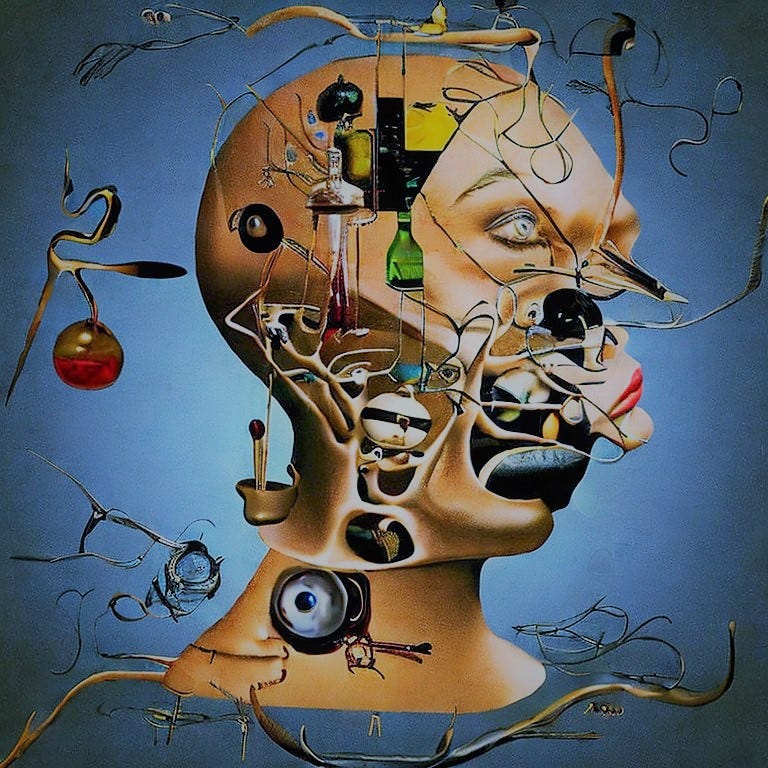

The weight of a human brain is close to three pounds. It is around sixty percent fat, largely in the form of insulation for the nerve axons, the brain’s electrical connectors, like the plastic coating on a copper wire. That’s what makes up the white matter of the brain: countless bundles of sheathed axons linking nerve cells in one part of the brain to those in another. The grey matter, by contrast, is a mass of cell bodies and uncoated connections. There are just under a hundred billion nerve cells, or neurons, in the human brain. They are of around two hundred different types, supporting different numbers of connectors and sporting different shapes and different electrical capacities.

These billions of neurons of two hundred different types are then outnumbered by various other types of cells in the brain offering support functions such as creating the insulation, acting as scaffolding, delivering nutrients from the blood supply and spraying the neurons with an ever-changing cocktail of proteins to regulate the speed of electrical transmission between them. The complexity is overwhelming. And none of it, it turns out, matters a lot.

To host a mind, all you need are the neurons. Everything else is there only to have an effect on the flow of charge between them, and that can all be modelled in a way that emerges from what the neurons do. I mentioned that there are a couple of hundred different types of neuron. To look at, this is true, but physically and biochemically they all do the same thing. All that matters in the end is the flow of electrical charge. Some types of neuron can support greater numbers of connections than others; some can reach further to touch others in more remote locations; but they all do the same job, and it is a relatively simple one.

Ripples in Rain

A memory? A thought? An emotion? An idea? All these things are constellations of neurons firing more or less together in your head, as charge flows from one to the next along a route carved by previous firings. But all those neurons are doing the same job as one another. There’s nothing special about any particular cell; there is, however, something very special about the connections between them. Those ever-evolving connections, the patterns of charge flowing across millions of cells in your brain and nervous system: they are you.

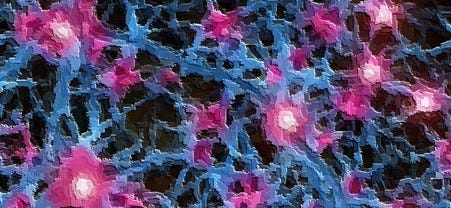

One could argue, in an enjoyably circular act of anthropomorphism, that neurons have the sole purpose of relaying electrical potential, the synapses by which one neuron passes charge to another outnumbering the neurons themselves more than a thousand-fold. So while there are fewer than a hundred billion neurons in a human brain, there are over a hundred trillion primary synapses and many more secondary ones. An individual neuron can send charge, via these synapses, to any number of other neurons, and it continually reassesses which others to send charge to and what proportion to send to each, based upon which of its neighbours and existing contacts have been most active at the same time and which are exuding particular chemicals at just the right moment. Just as a neuron can send charge to any number of other neurons, it can receive charge from any number of other neurons that have extended a connection to it. Each of these may send it some charge, and however many of them fire within a few milliseconds of one another, all sending some of their charge to our receiving neuron, it will itself fire only if the net effect of all that received charge exceeds a certain activation threshold.
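The firing rule described above can be sketched in a few lines of Python. This is a deliberately crude illustration, not a model of a real neuron: the function name, the threshold of 1.0 and the idea of representing inhibitory input as negative charge are all assumptions made for the example.

```python
def neuron_fires(incoming_charges, threshold=1.0):
    """Return True if the net charge received in one short window exceeds the threshold.

    The threshold value is an arbitrary assumption for illustration.
    """
    net_charge = sum(incoming_charges)  # negative values stand in for inhibitory input
    return net_charge > threshold


# Three upstream neurons fire within the same few milliseconds.
weak_volley = [0.4, 0.3, -0.2]    # net charge of about 0.5: stays silent
strong_volley = [0.6, 0.5, 0.3]   # net charge of about 1.4: fires

print(neuron_fires(weak_volley))
print(neuron_fires(strong_volley))
```

How the incoming charges are really weighed against one another is exactly the first of the open questions raised further on; a plain sum is only the simplest possible guess.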

Neural activation, and the function that determines it, is a hot topic in AI research, but while the mechanism that causes a neuron to fire may not yet be precisely understood, it is surely understandable. It doesn't require any new physics. Equally unclear but not unintelligible is the process by which firing together makes neurons more likely to fire together again: the phenomenon we call learning. It is clearly more sophisticated than “fire together, wire together”, and AI researchers attempt to be more sophisticated, with techniques such as back-propagation and stochastic gradient descent, but nothing has yet successfully modelled learning in a brain. The mathematics of biology and the mathematics of biologists rely on different tricks, as Roger Penrose has long speculated.
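For reference, the naive “fire together, wire together” rule that the paragraph above calls too simple might look something like this. It is a sketch under stated assumptions: the learning rate, the slow decay of unused connections and the function name are all invented for illustration, and nothing here is claimed to match what a biological synapse does.

```python
def hebbian_update(weight, pre_fired, post_fired, learning_rate=0.1, decay=0.01):
    """Strengthen a connection when both neurons fire together; otherwise let it fade.

    learning_rate and decay are hypothetical values chosen for the example.
    """
    if pre_fired and post_fired:
        return weight + learning_rate
    return max(0.0, weight - decay)  # a connection never drops below zero strength


weight = 0.5
# Each pair records whether the sending and receiving neuron fired in one time window.
history = [(True, True), (True, False), (True, True), (False, False)]
for pre, post in history:
    weight = hebbian_update(weight, pre, post)

print(round(weight, 2))
```

Two coincident firings strengthen the connection and two misses let it fade a little, so the weight ends up higher than it started.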

So we have two small mysteries still to solve:

How are the weighted inputs of all the synapses passing charge to a neuron evaluated to make a net decision whether the neuron fires or not?

Precisely what factors influence a neuron to make new synapses, redistribute charge among its existing ones and prune those connections no longer needed?

Aside from these small gaps in knowledge, though, that seems to be all there is to it. That is a brain. All the macro structures we find when we dissect one, and all the different types of neurons, glia and other cells that we find inside: none of that really matters. Evolution doesn't achieve things in the most straightforward way. That stuff's just substrate. It all influences the moving patterns of charge that constitute a mind but there's nothing else going on as a result. There is no ghost or shadow-IT in the machine. Just patterns of moving electrical energy lighting up constellations of discharge in an endless succession of fleeting moments. That's you. That's me. That’s us.

The Blind Matchmaker

To reproduce fairly faithfully the behaviour of a neuron takes just under a hundred lines of code and up to five kilobytes of storage. One hundred lines of code, almost all concerned with logging which other neurons are connected to this one and strengthening or weakening those connections as firing patterns evolve. You write the program only once. Even I can write a hundred lines of code inside an hour. Load it into memory and you have a working neuron. Load it into memory a hundred billion times and you can have a brain. That requires about a petabyte of memory. All we needed, we thought back in the nineties, were computers fast enough and storage cheap enough and this could be within reach. We could create a working brain, or at least a decent model of one. Oh, the disappointment when we discovered that, while a brain we could indeed have, it still wouldn't be a mind. For without input, all you have is substrate; without training, there is no learning; and there is such a fantastic amount of learning to be done.
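The storage estimate is easy to check. The short calculation below takes the figures quoted above at face value and assumes decimal units (1 kB = 1,000 bytes, 1 PB = 10^15 bytes); the variable names are mine.

```python
NEURONS = 100_000_000_000   # "a hundred billion" copies of the program's state
BYTES_PER_NEURON = 5_000    # "up to five kilobytes" each (decimal kilobytes assumed)
PETABYTE = 10**15           # decimal petabyte

total_bytes = NEURONS * BYTES_PER_NEURON
petabytes = total_bytes / PETABYTE

print(f"{petabytes} PB")
```

At the five-kilobyte upper bound this comes to half a petabyte, the same order of magnitude as the round figure of about a petabyte.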

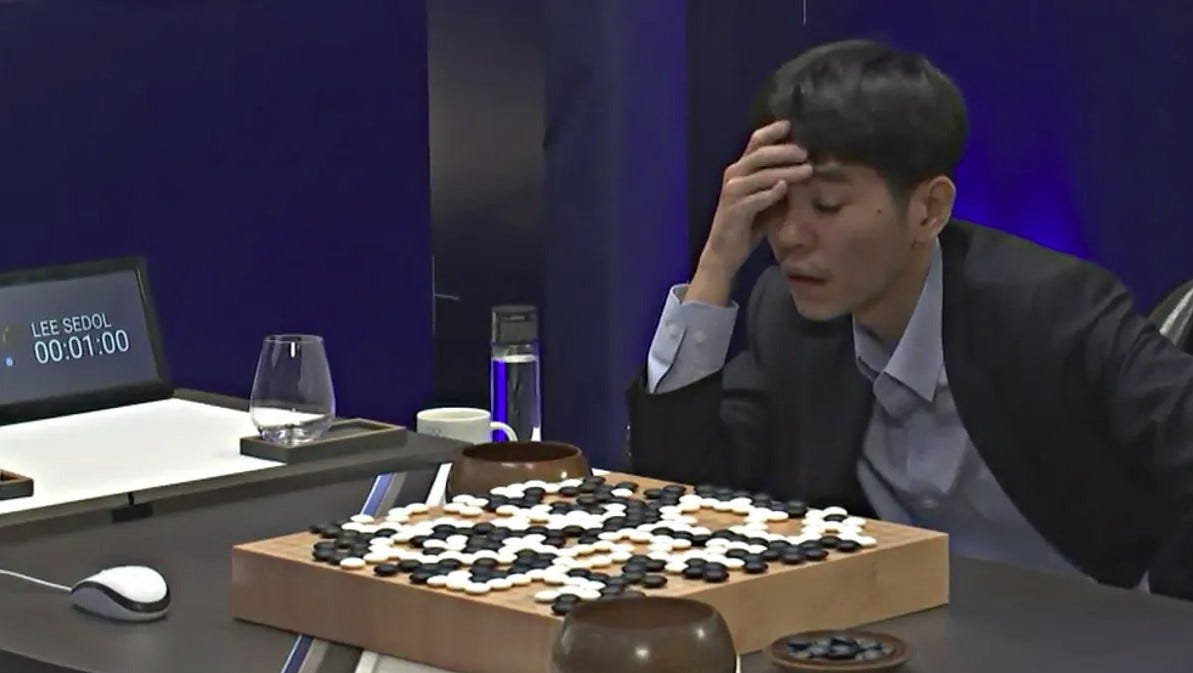

Deep Blue’s triumph at chess and Watson’s at Jeopardy relied almost entirely on the speed and capacity of modern computers to beat their human rivals. Neither was a triumph of artificial intelligence so much as a demonstration that chess and Jeopardy are tests of computational speed and memory rather than intelligence. Ten years ago, a new approach to AI became a more potent threat.

Risk and Reinforcement

Though convolutional neural networks were originally conceived in the 1980s, it was only in the last decade or so that computing power has reached a level capable of hosting them at scale. These networks have many layers of artificial neurons between input and output, possibly as many as several hundred, and progressive layers recognise increasingly sophisticated objects in the data by passing their conclusions onwards to be assembled, correlated and classified. Rather than truly modelling a network of neurons, they perform simple maths on vast arrays of numbers. Such an artificial neural network is not really a neural network at all. There are no shifting constellations of charge; there are only numbers, lots of numbers. The term deep learning was coined for this type of processing, and it is proving extremely successful at an increasing number of tasks.
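To give a flavour of the “simple maths on vast arrays of numbers”, here is a sketch of the basic operation inside one convolutional layer, written in plain Python. The tiny image, the hand-picked edge-detecting kernel and the function names are assumptions for illustration; a real network learns its kernels and stacks many such layers.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1), summing elementwise products.

    Strictly this is cross-correlation, which is what deep learning libraries
    conventionally call convolution.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map):
    """Keep positive responses and zero out the rest."""
    return [[max(0, value) for value in row] for row in feature_map]


# A 3x4 "image" that is dark on the left and bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# A hypothetical kernel that responds to dark-to-bright vertical edges.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]

features = relu(convolve2d(image, vertical_edge))
print(features)  # [[0, 2, 0], [0, 2, 0]]
```

The output lights up only in the column where dark meets bright. Later layers repeat the same arithmetic on the outputs of earlier ones, which is how progressively more sophisticated objects come to be recognised.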

AlphaGo, DeepMind’s deep reinforcement learning network which defeated world Go champion Lee Sedol in 2016, truly learned continually, and it invented a new winning strategy unknown in the history of the game. Chinese scholars of Go marvelled at the system’s ingenuity. Google went on to develop AlphaZero, a blank-slate AI that learns a game from self-play alone, given nothing but the rules, and then MuZero, which is not even told the rules. Such a system initially plays terribly but it’s riding an asymptotic learning curve until it develops winning strategies that exceed any developed by humans. The network learns not by comparing its output with external training data but from its own data generated by the previous version of itself. So each iteration creates training data for the next, and each set becomes more challenging than the last. With each bout of self-play, competing against the skills of its earlier self, the system learns to become a stronger game player but it also learns how to learn more effectively.

Dreams and False Alarms

In the summer of 2015, Alexander Mordvintsev of Google Labs took a convolutional neural network that had been trained on visual data and ran it in reverse. Having dramatically downscaled the source images, he fed them back along the network with a gradient ascent to maximise the activation of some of the neural layers. The network had been originally intended as a means of identifying particular objects, such as prams or clouds or people, in any image; Alexander used it to upscale the images he had downscaled, and in attempting to restore the lost detail the network began to hallucinate some of the objects it had been taught to recognise. At the University of Sussex, Keisuke Suzuki and Anil Seth took this a little further. They used a network that had been taught to recognise dogs, fed it a video of the main square in the university campus and told it there were dogs in the footage. It saw dogs everywhere and generated images that are, to my mind, frankly scary.

Bear in mind that these errors were a deliberately orchestrated result of the poor resolution of the downscaled images. Often, though, we make the same kinds of mistakes with our own vision. As Seth points out, we are all hallucinating all of the time; when we agree about our hallucinations, we call that reality. Perception is not so much an act of labelling and categorising the environment as one of projecting hypotheses on to it and testing them by comparing what we think is out there with the information our senses are feeding us. That new understanding was a breakthrough not just in phenomenology and cognitive research; the door was now wide open for the development of Generative AI.

The Art of Noise

A year earlier, Ian Goodfellow, having just joined Google Brain, had developed an idea to pit two convolutional neural networks against each other. One, having been trained on a particular kind of data, would attempt to generate new examples of a similar kind, while the other would study the results, blended with the original training set, and aim to work out which were original and which generated (in other words, classify them as either real or fake). The two networks, the generator and the discriminator, would compete with each other in a zero-sum game, each attempting to maximise its score at the other’s expense and thus challenging it to optimise itself. Researchers at the University of Amsterdam took this a little further, again pitting one network against another but asking the first to act as an encoder by reducing the source data to an essential representative sample or model; the second network then acts as a decoder, which tries to reproduce the original from the model and works with the encoder to perfect that model over an ever-increasing pool of potential data.
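The zero-sum contest between generator and discriminator is usually written as a single minimax objective. The notation below follows the standard formulation in the generative adversarial network literature rather than anything defined in this essay: $G$ is the generator, $D$ the discriminator, $x$ a real example drawn from the training data, and $z$ the random input from which the generator builds a fake.

$$
\min_{G} \max_{D} \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_{z}}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator tries to push this quantity up by scoring real examples near 1 and generated ones near 0; the generator tries to pull it down by producing fakes that the discriminator scores as real.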

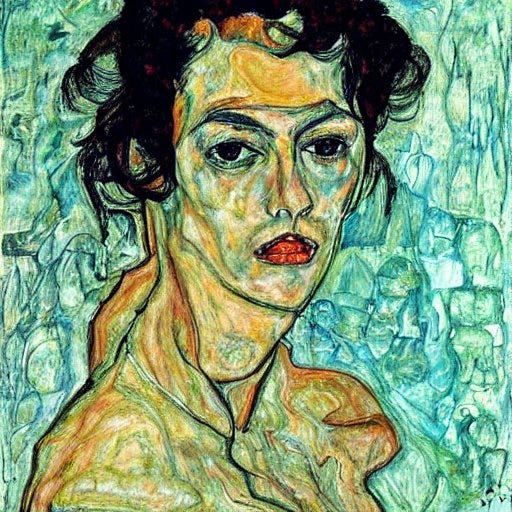

One immensely successful variant of this approach involves an encoder that gradually adds random noise to the training data, noting the changes at each step so that the decoder can cleanly remove all the noise but learn the features of the original data along the way. Applying the decoder to new random noise can generate novel content that is nonetheless clear, because the model has learned the kinds of objects and patterns to expect. Midjourney, Stable Diffusion and DALL-E all make use of these Denoising Diffusion Probabilistic Models to fabricate images that have never existed before, giving us such impossibilities as Egon Schiele’s portrait of Monica Bellucci.
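The noise-adding half of that process is simple enough to sketch. The code below shows only the forward, noising direction, using the usual Gaussian step in which the signal is shrunk slightly and a little noise is mixed in; the learned denoising network, which is the hard part, is omitted entirely. The four-value "image", the linear noise schedule and all the names are assumptions for illustration.

```python
import math
import random


def add_noise_step(values, beta, rng):
    """One forward step: shrink the signal slightly and mix in Gaussian noise of variance beta."""
    keep = math.sqrt(1.0 - beta)
    spread = math.sqrt(beta)
    return [keep * v + spread * rng.gauss(0.0, 1.0) for v in values]


def forward_diffusion(values, betas, seed=0):
    """Apply every noising step in turn and return the whole trajectory."""
    rng = random.Random(seed)  # seeded so that runs are repeatable
    trajectory = [list(values)]
    for beta in betas:
        trajectory.append(add_noise_step(trajectory[-1], beta, rng))
    return trajectory


signal = [1.0, -1.0, 1.0, -1.0]               # stand-in for a few pixel values
betas = [0.02 * (t + 1) for t in range(20)]   # hypothetical linear noise schedule

trajectory = forward_diffusion(signal, betas)
print(len(trajectory))   # the original plus one entry per noising step
print(trajectory[-1])    # by now the values are mostly noise
```

A denoising model would be trained to undo each of these steps in turn, which is what later lets it start from pure noise and work backwards to a clean, novel image.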

Fear and Fortitude

The achievement of AI systems like this is bewildering and seems truly creative but there is still a chasm between this kind of system and us. There are people out there who may tell you that they are on the verge of creating a consciousness within their AI systems. Nine out of ten of them are just plain wrong, and the other guy is lying.

Though the last architectural breakthrough in AI design was in 2015, the runaway success of generative AI in the creative arts since then has reignited public interest in the field. Naturally, concerns over the ethics and dangers of the technology have also increased, and calls to curb AI development are not uncommon. After all, just because you can make a thing, it doesn’t mean that you should; it is likely, however, that before long somebody will. So should we be concerned?

Truly, I don’t think so. Nothing happening currently in DeepMind, OpenAI, Baidu or any of the other major players is getting any nearer to artificial sentience, nor is it likely to. Current generative models, powerful as they are, represent a blind alley on the route to hard AI, and the burst of momentum we’ve just seen in the field may shortly wane now that little further progress can be made in that direction. As Noam Chomsky remarked of ChatGPT, it’s a nice toy.

We will never construct an artificial conscious mind, no matter how close some companies will claim to be. And not because there is any magic to it. It’s just connections and patterns of charge, changing from one moment to the next. That’s all we are. The issue is how the majority of those connections got there in the first place. The big gap between AI and us is what amounts to our firmware.

Your mind is more than a hundred trillion connections and only some of them have emerged since you were conceived; most were programmed in from the start. Half a billion years of evolution have shaped your mind to be what it is, so that it comes preloaded with software in the form of predetermined weighted connections, or at least predetermined tendencies to form them in particular patterns. In other words, the majority of neural networks within your brain have already been programmed, and although you are constantly learning throughout your life, all the knowledge and experience you gain amounts to little more than fine-tuning the mind that your genome creates. In fact, a baby has more synaptic connections than you do; the majority of early learning involves pruning or weakening synapses rather than growing new ones. In a way, adult intelligence emerges from chaotic noise. Even though your brain is still growing in size and sophistication until the age of twenty, half of the new connections that will form during those first twenty years of life are determined by your DNA, not your experiences.

Sense and Singularity

To create a mind, you cannot simply build a vast neural network, start teaching it things and hope that it will spontaneously develop consciousness. It will not. It may come to know things but it won’t know that it knows them. It won’t know what knowing feels like, it won’t truly have an opinion about whether it should know things, or whether you should. It won’t feel anything. It won’t really think. The software that makes all those phenomena possible is not something learned within an individual human lifetime; it is something bequeathed to you by three hundred million generations of ancestors and it took half a billion years of software patches to get to the current release of the complex attractor state that is you. That’s a hard act to repeat, and it’s far more than just maths. AI research will not achieve it any time soon. And that helps me to sleep at night.
